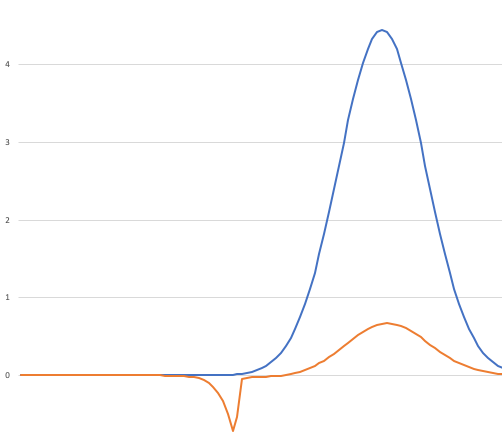

One more time! Suppose we have our nominal distribution p that possibly has “black swans” and our helper distribution q which has the same support, but no black swans.

We wish to compute the expected value of f when applied to samples from p, and we’ve seen that we can estimate it by computing the expected value of g:

x => f(x) * p.Weight(x) / q.Weight(x)

applied to samples of q.

Unfortunately, the last two times on FAIC we saw that the result will be wrong by a constant factor; the constant factor is the quotient of the normalization constants of q and p.

It seems like we’re stuck; it can be expensive or difficult to determine the normalization factor for an arbitrary distribution. We’ve created infrastructure for building weighted distributions and computing posteriors and all sorts of fun stuff, and none of it assumes that weights are normalized so that the area under the PDF is 1.0.

But… we don’t need to know the normalization factors. We never did, and every time I said we did, I lied to you. Because I am devious.

What do we really need to know? We need to know the quotient of two normalization constants. That is less information than knowing two normalization constants, and maybe there is a cheap way to compute that fraction.

Well, let’s play around with computing fractions of weights; our intuition is: maybe the quotient of the normalization constants is the average of the quotients of the weights. So let’s make a function and call it h:

x => p.Weight(x) / q.Weight(x)

What is the expected value of h when applied to samples drawn from q?

Well, we know that it could be computed by:

Area(x => h(x) * q.Weight(x)) / Area(q.Weight)

But do the algebra: that’s equal to

Area(p.Weight) / Area(q.Weight)

Which is the inverse of the quantity that we need, so we can just divide by it instead of multiplying!

Here’s our logic:

- We can estimate the expected value of

gon samples ofqby sampling. - We can estimate the expected value of

hon samples ofqby sampling. - The quotient of these two estimates is an estimate of the expected value of

fon samples ofp, which is what we’ve been after this whole time.

Whew!

Aside: I would be remiss if I did not point out that there is one additional restriction that we’ve got to put on helper distribution q : there must be no likely values of x in the support of q such that q.Weight(x) is tiny but p.Weight(x) is extremely large, because their quotient is then going to blow up huge if we happen to sample that value, and that’s going to wreck the average.

We can now actually implement some code that computes expected values using importance sampling and no quadrature. Let’s put the whole thing together, finally: (All the code can be found here.)

public static double ExpectedValueBySampling<T>(

this IDistribution<T> d,

Func<T, double> f,

int samples = 1000) =>

d.Samples().Take(samples).Select(f).Average();

public static double ExpectedValueByImportance(

this IWeightedDistribution<double> p,

Func<double, double> f,

double qOverP,

IWeightedDistribution<double> q,

int n = 1000) =>

qOverP * q.ExpectedValueBySampling(

x => f(x) * p.Weight(x) / q.Weight(x), n);

public static double ExpectedValueByImportance(

this IWeightedDistribution<double> p,

Func<double, double> f,

IWeightedDistribution<double> q,

int n = 1000)

{

var pOverQ = q.ExpectedValueBySampling(

x => p.Weight(x) / q.Weight(x), n);

return p.ExpectedValueByImportance(f, 1.0 / pOverQ, q, n);

}

Look at that; the signatures of the methods are longer than the method bodies! Basically there’s only four lines of code here. Obviously I’m omitting error handling and parameter checking and all that stuff that would be necessary in a robust implementation, but the point is: even though it took me six math-heavy episodes to justify why this is the correct code to write, actually writing the code to solve this problem is very straightforward.

Once we have that code, we can use importance sampling and never have to do any quadrature, even if we do not give the ratio of the two normalization constants:

var p = Normal.Distribution(0.75, 0.09);

double f(double x) => Atan(1000 * (x – .45)) * 20 – 31.2;

var u = StandardContinuousUniform.Distribution;

var expected = p.ExpectedValueByImportance(f, u);

Summing up:

- If we have two distributions

pandqwith the same support… - and a function

fthat we would like to evaluate on samples ofp… - and we want to estimate the average value of

f… - but

phas “black swans” andqdoes not, then: - we can still efficiently get an estimate by sampling

q - bonus: we can compute an estimate of the ratios of the normalization constants of

pandq - extra bonus: if we already know one of the normalization constants, we can compute an estimate of the other from the ratio.

Super; are we done?

In the last two episodes we pointed out that there are two problems: we don’t know the correction factor, and we don’t know how to pick a good q. We’ve only solved the first of those problems.

Next time on FAIC: We’ll dig into the problem of finding a good helper distribution q.