Scott Hanselman, the Microsoft VP for developer community outreach and general all-around good guy, was kind enough to ask me to be on his podcast, HanselMinutes, to talk about my upcoming book. I was delighted to spend a few minutes chatting with Scott about writing, the state of developer tools, and so on. If you want to check it out, use the link above, or the video embedded below, or look up HanselMinutes Wherever You Get Your Podcasts.

Book early-access discount code

Hey everyone, I first want to say a heartfelt thank you for the warm comments and supportive feedback I’ve gotten since I announced that I’m in the process of writing Fabulous Adventures In Data Structures And Algorithms. It means a lot to me.

The book is in the Manning Early Access Program (MEAP) now; the first six chapters are up and we’ll be adding more over the coming months. The initial 50% off site-wide sale is over, but for the next ten days, until November 27th, using the code MLLippert at checkout will give you 50% off.

Thanks again; more soon!

I’m writing another book!

Hey everyone, I’ve finally turned this blog into a book! Or, rather, I am deep in the process of turning this blog into a book. Yes, it’s about 20 years, give or take, after I first thought of doing so, but who’s counting? The title is, of course:

Fabulous Adventures In Data Structures And Algorithms

The first few chapters are now available as part of the Manning Early Access Program (MEAP); the idea of this program is that you pay now and get access to the book as I finish writing it. I plan to finish by the spring of 2026. That way you get access early, and I get to test the code in production. 🙂 The source code is at:

https://github.com/ericlippert/FabulousAdventures.

Even better, everything is 50% off STARTING RIGHT NOW and going until November 13th, so go engage in commerce!

If you have questions or suggestions or any constructive feedback at all, you can leave it as a comment here or on Manning’s forum for book comments.

I was not expecting to write another book. Here’s how it happened:

Over my career I’ve been a technical editor for a lot of the programming book publishers as a fun hobby that pays for itself; last year Manning asked me if I was interested in helping edit a book not about programming, but rather a book about writing about programming, and of course I jumped at the chance. Cynthia Dunlop and Piotr Sarna wrote an insightful (and surprisingly funny) book; editing Writing For Developers was one of the most enjoyable side gigs I ever had.

After we got that book into production at the end of last year, the editor in charge of it asked me if I had any ideas for books about the current Microsoft developer ecosystem. Sadly, I’ve been out of that loop for more than a decade now, so I don’t have a good sense of what is current. Well then, is there any other kind of book that you think is missing from the current market?

I’m occasionally asked by development editors at various publishers if I’d write them yet another “review the data structures and algorithms that you learned in school, forgot about, and now need to brush up on your skills for an interview” book. I am not interested in that! Many of those books are very good. That there is a market demand for more of them is perhaps a symptom that the industry is still not great at interviewing. But I am not interested in writing another one.

You know, I said to the editor, if hypothetically I were to write another programming book, which I absolutely do not want to do, it would be:

(Cover mockup by Editor Jonathan.)

The title needs work but can you write me a proposal showing what the table of contents for that book would look like?

A clever editor used his rhetorical judo skills to make me write a book proposal, which then got shown to a bunch of editors and industry people some of whom I thought were my friends, and those jerks all recommended that Manning convince me to write the book. Which they did.

More seriously though: long-time readers of my blog know that expanding my personal toolbox of programming techniques was key to my career growth, and that it’s important to me to share those tools with others. This blog was one of the main ways I did that in the last two decades, and I am excited to have the opportunity to reach a new audience.

I’ve spent the last few months reflecting on old blog articles and thinking about the topics I never got around to writing about here, and it’s been a lot of fun getting back into writing after a long, long break. More on that later. For now, please check out the MEAP edition, and let me know what you think!

Bean Machine Retrospective, part 9

I wanted to implement concise “pattern matching” in Python, a language which, unlike C#, F#, Scala, and so on, had no pattern matching built in before the match statement arrived in Python 3.10. Logically a pattern is just a predicate: a function which takes a value and returns true if the value “matches” the pattern, false otherwise. The code for this episode is here.

When I embarked on this I realized two things. First, that it might be nice for debugging purposes to have more information in the return value than just “true” or “false”; in particular, when a complex pattern fails to match, but it should match, then I’ve made a mistake. In order to debug that mistake, I actually made patterns return a new type:

class MatchResult(ABC):
    test: Any
    submatches: Dict[str, "MatchResult"]
    # ... and so on, boilerplate code for the rest of this.

A complex pattern might fail because of its component patterns failing to match, and so that information is included in the failure result. I also made subclasses Success and Fail.

You know I just realized this very moment that I’ve used “Fail” as a noun here. It should have been Failure. Oh well.

Second, I realized that using functions for everything would lack concision. I didn’t want to write code like this throughout:

is_binop = lambda x: Success(x) if isinstance(x, ast.BinOp) else Fail(x)

The notion of “is this thing a binop” is captured entirely by the type BinOp already; could we just use that object where a pattern is required?

After playing around with different options I eventually landed on this type discipline:

class PatternBase(ABC):
    @abstractmethod
    def match(self, test: Any) -> MatchResult:
        pass

    def __call__(self, test: Any) -> MatchResult:
        return self.match(test)

Pattern = Union[PatternBase, int, str, float, type, list, None]

A pattern base object has a match predicate which takes a test value and, moreover, may be treated as a function you can call.

Anywhere that we need a pattern, the caller may provide:

- an instance of pattern base

- a None, integer, float or string value; the semantics are “the pattern matches if test is equal to the given value”.

- a type; the semantics are “the pattern matches if test is of the given type”

- a list of patterns; the semantics are “the pattern matches if test is a list of the same length, and each list element matches the corresponding pattern”

So far we can express patterns such as

p = [ BinOp, 3, None ]

# p matches a list of three items where the first is a binary op AST,

# the second is the number 3, and the third is the value None
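
To make the dispatch rules above concrete, here is a minimal sketch of how a free function `match` might interpret each kind of pattern. It is not the Beanstalk implementation: the simplified `MatchResult` classes, the string keys used for list submatches, and the fallback of treating any other pattern as a callable are all assumptions made for brevity.

```python
import ast
from typing import Any, Dict, Optional


class MatchResult:
    """Simplified stand-in for the article's MatchResult hierarchy."""

    def __init__(self, test: Any,
                 submatches: Optional[Dict[str, "MatchResult"]] = None):
        self.test = test
        self.submatches = submatches or {}

    def is_success(self) -> bool:
        return isinstance(self, Success)


class Success(MatchResult):
    pass


class Fail(MatchResult):
    pass


def match(pattern: Any, test: Any) -> MatchResult:
    """Hypothetical dispatcher: interpret `pattern` according to its kind."""
    if isinstance(pattern, type):
        # A type matches any instance of that type.
        return Success(test) if isinstance(test, pattern) else Fail(test)
    if isinstance(pattern, list):
        # A list matches a list of the same length, element by element.
        if not isinstance(test, list) or len(test) != len(pattern):
            return Fail(test)
        subs = {str(i): match(p, t)
                for i, (p, t) in enumerate(zip(pattern, test))}
        ok = all(s.is_success() for s in subs.values())
        return Success(test, subs) if ok else Fail(test, subs)
    if pattern is None or isinstance(pattern, (int, float, str)):
        # A plain value matches by equality.
        return Success(test) if test == pattern else Fail(test)
    # Anything else is assumed to be callable, like a PatternBase instance.
    return pattern(test)


p = [ast.BinOp, 3, None]
add = ast.BinOp(left=ast.Constant(value=1), op=ast.Add(),
                right=ast.Constant(value=2))
good = match(p, [add, 3, None])   # every element matches
bad = match(p, [add, 4, None])    # the second element is not 3
```

Because the failing result carries its submatches, `bad.submatches["1"]` points directly at the element that did not match, which is the debugging benefit described earlier.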

But how would we express “match instances of Foo whose bar attribute is 3”? We can start by solving the more general problem: how do we match against multiple patterns? Here’s our first combinator:

def match_every(*patterns: Pattern) -> Pattern:
    # ... the implementation is tedious to make it efficient,
    # but you get the idea; we produce a pattern which succeeds if
    # every given subpattern succeeds, and fails otherwise.
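
For readers who want something runnable, a naive version of this combinator could look like the sketch below. It makes no attempt at the efficiency mentioned in the comment, and it assumes patterns are plain callables returning a simplified `Result` record; `Result`, `type_is`, and `equals` are hypothetical helpers invented for this example.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass
class Result:
    """Simplified stand-in for MatchResult: a success flag plus submatches."""
    test: Any
    success: bool
    submatches: Dict[str, "Result"] = field(default_factory=dict)


Pattern = Callable[[Any], Result]  # assumption: patterns are plain callables


def type_is(typ: type) -> Pattern:
    return lambda test: Result(test, isinstance(test, typ))


def equals(value: Any) -> Pattern:
    return lambda test: Result(test, test == value)


def match_every(*patterns: Pattern) -> Pattern:
    """Naive version: succeed only if every subpattern succeeds."""
    def matcher(test: Any) -> Result:
        submatches: Dict[str, Result] = {}
        for index, pattern in enumerate(patterns):
            result = pattern(test)
            submatches[str(index)] = result
            if not result.success:
                # Stop at the first failure, keeping what we learned so far.
                return Result(test, False, submatches)
        return Result(test, True, submatches)
    return matcher


is_int_three = match_every(type_is(int), equals(3))
```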

We can solve the specific problem of matching a subpattern that is an attribute of the tested value:

class AttributeSubpattern(PatternBase):
    name: str
    subpattern: Pattern

    def match(self, test: Any) -> MatchResult:
        submatch = match(self.subpattern, getattr(test, self.name, None))
        submatches = {self.name: submatch}
        if submatch.is_success():
            return Success(test, submatches)
        return Fail(test, submatches)

    # ...

It’s a little clunky to call AttributeSubpattern so I made a synonym function attribute as well. Notice that this class is a combinator; it takes a pattern and produces a new pattern.

And now we can make a third combinator out of the first two: I want a pattern that checks to see if an object is of a particular type, and whether it has an attribute which matches a pattern:

def type_and_attributes(typ: type, patterns: Dict[str, Pattern]) -> Pattern:
    t: List[Pattern] = [typ]
    tuples: List[Pattern] = [
        attribute(name, subpattern) for (name, subpattern) in patterns.items()
    ]
    return match_every(*(t + tuples))

And now we can say

is_addition = type_and_attributes(BinOp, {"op": Add})

Or, even better, let’s make a pattern that always matches and yet another combinator:

class AnyPattern(PatternBase):
    def match(self, test: Any) -> MatchResult:
        return Success(test)

_any = AnyPattern()

def binop(
    op: Pattern = _any,
    left: Pattern = _any,
    right: Pattern = _any) -> Pattern:
    return type_and_attributes(
        BinOp, {"op": op, "left": left, "right": right})

is_addition = binop(Add)

I also defined patterns or combinators for:

- Match at least one of these patterns

- Match the first item in a list to one pattern and the tail of the list to another

- Match at least one item in a list to a given pattern

- Match all items in a list to the same pattern

- Match the opposite of this pattern

- Helper combinators for commonly used AST nodes such as identifiers, operators, and so on.

- And so on; you get the idea.

With these tools in my toolbox I could then rapidly create patterns such as “is any item in this list not an identifier?”

any_non_id = ListAny(negate(name()))
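
A few of the combinators listed above can be sketched in a handful of lines if we simplify patterns down to plain Boolean predicates. That simplification is an assumption made for this example, as are the lowercase names `is_type`, `match_any`, `list_any`, and `list_all`; here `name` is a ready-made predicate rather than a function returning a pattern.

```python
import ast
from typing import Any, Callable

Predicate = Callable[[Any], bool]  # assumption: patterns reduced to predicates


def is_type(typ: type) -> Predicate:
    return lambda test: isinstance(test, typ)


def negate(pattern: Predicate) -> Predicate:
    """Match exactly when the given pattern does not."""
    return lambda test: not pattern(test)


def match_any(*patterns: Predicate) -> Predicate:
    """Match when at least one of the given patterns matches."""
    return lambda test: any(p(test) for p in patterns)


def list_any(pattern: Predicate) -> Predicate:
    """Match a list in which at least one item matches the pattern."""
    return lambda test: isinstance(test, list) and any(pattern(t) for t in test)


def list_all(pattern: Predicate) -> Predicate:
    """Match a list in which every item matches the pattern."""
    return lambda test: isinstance(test, list) and all(pattern(t) for t in test)


name = is_type(ast.Name)
any_non_id = list_any(negate(name))

all_names = [ast.Name(id="x", ctx=ast.Load()), ast.Name(id="y", ctx=ast.Load())]
mixed = all_names + [ast.Constant(value=1)]
```

With these definitions `any_non_id(all_names)` is false and `any_non_id(mixed)` is true, since only the second list contains something other than an identifier.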

Patterns identify what code needs to be transformed; rules transform it. Next time on FAIC we’ll look at building a set of rules and rule combinators for concisely representing AST transformations.

Bean Machine Retrospective, part 8

Before getting into the details of how my combinator-inspired source code transformation system works, I should answer two questions. First, what is the general shape of the system? Second, why did I build it at all? In my experience, a typical compiler’s AST rewriter does not use a combinator-based approach. Roslyn, for example, used the visitor pattern almost exclusively to build rewriters, and that’s pretty common.

The basic overview is: we use a functional programming approach to AST transformation.

- A pattern represents a function from AST to bool. Is this an AST node that we’re looking to transform?

- A rule represents a “partial” function from AST to AST. By “partial” I mean that when given an AST, a rule is allowed to return the same AST, a different AST, or a failure code indicating that the rule does not apply to the given input.

- A combinator is a function that takes some number of rules and patterns and returns either a rule or a pattern.

That’s pretty highfalutin, I know. Next time we’ll look at some examples of patterns, and a concise way to represent the simplest ones.
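
As a rough illustration of the three roles, the sketch below models a pattern as a function from AST to bool, a rule as a function from AST to an optional AST (with `None` standing in for the failure code), and two combinators that build rules from those pieces. The names `when`, `first_match`, `is_constant_addition`, and `fold_addition` are hypothetical and chosen for this example; the real system uses richer, self-describing objects.

```python
import ast
from typing import Callable, Optional

Pattern = Callable[[ast.AST], bool]
Rule = Callable[[ast.AST], Optional[ast.AST]]  # None means "does not apply"


def is_constant_addition(node: ast.AST) -> bool:
    """Pattern: a BinOp that adds two numeric constants."""
    return (
        isinstance(node, ast.BinOp)
        and isinstance(node.op, ast.Add)
        and isinstance(node.left, ast.Constant)
        and isinstance(node.right, ast.Constant)
        and isinstance(node.left.value, (int, float))
        and isinstance(node.right.value, (int, float))
    )


def fold_addition(node: ast.AST) -> Optional[ast.AST]:
    """Rule body: replace c1 + c2 with the constant sum."""
    return ast.Constant(value=node.left.value + node.right.value)


def when(pattern: Pattern, rule: Rule) -> Rule:
    """Combinator: apply `rule` only where `pattern` matches, else fail."""
    return lambda node: rule(node) if pattern(node) else None


def first_match(*rules: Rule) -> Rule:
    """Combinator: try each rule in order and return the first success."""
    def combined(node: ast.AST) -> Optional[ast.AST]:
        for rule in rules:
            result = rule(node)
            if result is not None:
                return result
        return None
    return combined


fold = when(is_constant_addition, fold_addition)
identity: Rule = lambda node: node      # a rule that always succeeds
fold_or_keep = first_match(fold, identity)

expr = ast.parse("2 + 3", mode="eval").body
other = ast.parse("x + 3", mode="eval").body
```

Here `fold(expr)` produces a constant node holding 5, `fold(other)` fails with `None`, and `fold_or_keep(other)` returns the input unchanged.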

Why choose a combinator approach for Beanstalk, the Bean-Machine-to-BMG compiler? I had a number of reasons:

- This approach gives a lot of concision for many rules and patterns. Simple code looks simple, is easy to read, and most important, it is easy to reason about its correctness. To be fair, this benefit is somewhat offset by making some of the more complicated rewrites a little tricky to understand, but I tried to limit those.

- Any portion of the rewriting logic can very easily be independently tested. If you want to test any predicate, any transformation rule, or any combination of them, it’s straightforward to create test cases.

- Experimenting with different rewriting strategies becomes trivial. For example, we’ll see later that a lot of the AST transformations work “top down” — that is, they transform the root node, and then transform the children, and so on, heading towards the leaves. Is a bottom-up rewriter more efficient, where we transform the leaves and move up to the root? In a conventional compiler, to change an algorithm from top-down to bottom-up might be a considerable rewrite but as we’ll see, changing a top-down traversal to a bottom-up traversal is changing a single function call.

- Moreover, exotic strategies become just as easily tested. “Apply the rewrites both top-down, and then again bottom-up, and keep going back and forth until a fixpoint is reached” would be easy, if we wanted to try that. The optimization combinator — “try these n different rewrites and then pick the one that minimizes some cost function” — is a combinator I did not end up writing but I certainly intended to.

- In my system transformation rules are not just functions, they are self-describing objects. This can lead to some interesting debugging benefits. For example, some rules are known to always succeed, and some combinators apply a rule until it fails. Passing a transformation that always succeeds to a combinator that runs it until it fails is obviously going to produce an infinite loop. But the combinator that runs its rule until failure can ask “are you one of the rules that is known to never fail?” and immediately assert, finding the bug early. (You can guess what motivated that feature!)

- It’s fun! I’ve always been intrigued by the possibilities of “nanopass” architectures for compilers and by rewriting languages such as Stratego. I did not want to take a dependency on Stratego (and it would have been inconvenient to do so from a Python-based compiler) but the core parts of the rewriter are quite straightforward to implement, so I jumped at the chance to finally put these ideas into practice.
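
The claim that switching traversal order is a one-call change can be illustrated with a toy sketch. It assumes trees are nested tuples of the form `(op, child, child)` with integer leaves rather than Python AST nodes, and that a rule signals failure by returning `None`; `top_down`, `bottom_up`, `fixpoint`, and `fold_add` are hypothetical names for this example only.

```python
from typing import Any, Callable, Optional

Tree = Any                                 # an int leaf or (op, child, child)
Rule = Callable[[Tree], Optional[Tree]]    # None means the rule did not apply


def try_rule(rule: Rule) -> Callable[[Tree], Tree]:
    """Turn a partial rule into a total function: keep the input on failure."""
    def total(tree: Tree) -> Tree:
        result = rule(tree)
        return tree if result is None else result
    return total


def map_children(f: Callable[[Tree], Tree], tree: Tree) -> Tree:
    if isinstance(tree, tuple):
        return (tree[0],) + tuple(f(child) for child in tree[1:])
    return tree


def top_down(rule: Rule) -> Callable[[Tree], Tree]:
    step = try_rule(rule)
    def walk(tree: Tree) -> Tree:
        return map_children(walk, step(tree))   # rewrite node, then children
    return walk


def bottom_up(rule: Rule) -> Callable[[Tree], Tree]:
    step = try_rule(rule)
    def walk(tree: Tree) -> Tree:
        return step(map_children(walk, tree))   # rewrite children, then node
    return walk


def fixpoint(transform: Callable[[Tree], Tree]) -> Callable[[Tree], Tree]:
    def run(tree: Tree) -> Tree:
        while True:
            new = transform(tree)
            if new == tree:
                return tree
            tree = new
    return run


def fold_add(tree: Tree) -> Optional[Tree]:
    """Rule: ("add", int, int) becomes the sum; anything else fails."""
    if (isinstance(tree, tuple) and tree[0] == "add"
            and isinstance(tree[1], int) and isinstance(tree[2], int)):
        return tree[1] + tree[2]
    return None


expr = ("add", ("add", 1, 2), ("add", 3, 4))
```

A single top-down pass leaves `("add", 3, 7)` because the root is visited before its children are folded, a single bottom-up pass folds everything to 10, and wrapping the top-down pass in `fixpoint` also reaches 10. Each strategy differs from the others by one function call.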

Next time on FAIC: We’ll break down the problem into even smaller sub-problems, because we’re computer programmers, that’s what we do. How can we represent predicates about ASTs concisely in Python, and then compose them with combinators?

Bean Machine Retrospective, part 7

How do we write a compiler in a typical general-purpose line-of-business OO programming language such as Python, C#, Java, and so on? Compilers are programs, so we could make the question more general: how do we write programs?

The basic idea common to almost every widely used programming language is to use composition:

- Divide the problem into many sub-problems

- Write functions that each solve one or more sub-problems

- Compose a solution by writing functions that call other functions

The details of how those functions are organized vary from language to language of course; functions are stored in other functions, or in classes, or in modules, or whatever. But ultimately, most programs could be viewed as a composition of functions.

Now, we don’t simply call functions of course. Programming languages also have control flow, whereby they make decisions about what functions to call:

- Call foo() if and only if some predicate is true.

- Call foo() repeatedly until some predicate is false.

- Call foo() but branch to this catch block if it fails

- … and so on

We don’t normally think of control flow as a kind of function composition. What if we did? We can use ideas inspired by combinatory logic and functional programming to extract control flow into “combinators” and then use those to concisely build workflows to solve compiler problems.
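
To give a flavour of the idea, the sketch below lifts each control-flow form from the list above into an ordinary higher-order function. The names `compose`, `if_then`, `repeat_while`, and `try_or` are invented for this illustration and are not taken from any library or from the Bean Machine source.

```python
from typing import Any, Callable

Func = Callable[[Any], Any]
Pred = Callable[[Any], bool]


def compose(*fs: Func) -> Func:
    """Call each function in turn, feeding each result to the next."""
    def composed(x: Any) -> Any:
        for f in fs:
            x = f(x)
        return x
    return composed


def if_then(pred: Pred, f: Func) -> Func:
    """Call f if and only if the predicate is true; otherwise pass x through."""
    return lambda x: f(x) if pred(x) else x


def repeat_while(pred: Pred, f: Func) -> Func:
    """Call f repeatedly until the predicate is false."""
    def looped(x: Any) -> Any:
        while pred(x):
            x = f(x)
        return x
    return looped


def try_or(f: Func, handler: Func) -> Func:
    """Call f, but branch to the handler if f raises."""
    def guarded(x: Any) -> Any:
        try:
            return f(x)
        except Exception:
            return handler(x)
    return guarded


halve = lambda n: n // 2
is_nonzero_even = lambda n: n != 0 and n % 2 == 0
strip_twos = repeat_while(is_nonzero_even, halve)   # remove factors of two
safe_reciprocal = try_or(lambda n: 1 / n, lambda n: 0.0)
workflow = compose(if_then(lambda n: n < 0, abs), strip_twos, safe_reciprocal)
```

The `workflow` value contains no `if`, `while`, or `try` of its own; the control flow lives entirely inside the combinators that built it.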

A “parse tree” or abstract syntax tree (henceforth AST) is a data structure representing a syntactic analysis of a program. Over the next few episodes of this series we’ll explore the question of how a compiler writer might solve a common sub-problem in compiler design: how do we write an AST → AST function using an approach inspired by combinatory logic?

Since Bean Machine and its compiler are both written in Python, we’ll use the very convenient parse tree types already provided by the Python ast module. It’s very straightforward. Every node in the tree has a type and zero or more labeled children. A child can be a value such as a string or number, or a node, depending on the label.

For example, if we had a statement “x = 2 + 3” then the AST for the right side of the assignment could be constructed like this (assuming that all members of the ast module are brought into scope):

BinOp(
    left=Num(n=2),
    op=Add(),
    right=Num(n=3))

The expression’s AST is a binary operator; it has three children, left, right and op. The left and right are literal numbers; their child n is the value of that number. You get the idea I’m sure.

Every Python expression, statement, and so on has an AST node, and there are standard implementations of both parsers and unparsers; you can turn text into ASTs, turn ASTs back into text, and compile and run that program.
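
The round trip can be tried with nothing but the standard library, as in the sketch below. It assumes Python 3.9 or later, since `ast.unparse` was added in that release, and note that current versions of the parser produce `Constant(value=...)` nodes for literals rather than the older `Num(n=...)` spelling.

```python
import ast

source = "x = 2 + 3"
tree = ast.parse(source)          # text -> AST (a Module node)

assign = tree.body[0]             # the Assign statement
rhs = assign.value                # the BinOp for "2 + 3"

# Show the structure of the right-hand side: a BinOp with left, op, right.
print(ast.dump(rhs))

round_tripped = ast.unparse(tree)  # AST -> text

namespace = {}
code = compile(tree, filename="<ast>", mode="exec")
exec(code, namespace)              # run the program described by the AST
```

After this runs, `namespace["x"]` holds the value the assignment computed.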

Next time on FAIC: I’ll describe the patterns/rules/combinators system briefly, and then give some thoughts on what motivated this approach over a more conventional compiler technique such as visitor patterns for rewrites. Then we’ll start looking at examples of patterns and predicates.

The names of birds, part 4

The European starling is a lovely looking bird, though territorial, noisy and aggressive up close. Unfortunately, they are very invasive in North America. Most of the hundreds of millions of European starlings now living in the Americas can be found fighting over my suet feeder in winter months.

Last time we said that the kestrel obeys the identity Kxy=x for all birds x and y in the forest. The starling has a slightly more complicated identity: Sxyz=xz(yz).

Again, recall that this is parenthesized ((Sx)y)z=(xz)(yz).

Yes I know I used S for the “successor bird” in part 2; sorry for the confusion, this is a different bird.

If a forest contains both a starling and a kestrel, can you show that there is a bird in the forest which is fond of every bird in the forest? (Note that I’m implying here that there are at least two distinct birds in the forest, and therefore no lonely egocentric kestrel from last episode.)

Give it a try, and then scroll down.

.

.

.

.

.

.

.

Let’s call K to S; the starling calls back another bird. We’ll then call K to it. That calls back some other bird. We’ll then call any bird z in the forest to that bird.

Sxyz=(xz)(yz)

SKKz=(Kz)(Kz)

But Kz is a bird that is fixated on z, so no matter what we call to Kz, we always get z back:

SKKz=(Kz)(Kz)=z

We call SKK the identity bird I, because Iz=z for all z in the forest. Every value is a fixpoint of the identity function bird.

(Aside: Note that SKy names the identity bird for any bird y; we didn’t have to use K.)

Now that we know that any forest with both S and K in it also has I in it, can you prove that the forest also has M in it? (Recall that Mx=xx for all x.) Give it a try and then scroll down.

.

.

.

.

.

.

.

SIIz=(Iz)(Iz)=zz, so SII is M.
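
Both derivations can be checked mechanically by writing the birds as curried Python lambdas. This is only a sketch for experimenting with the identities; the test values passed in are arbitrary.

```python
# Curried combinators: each takes one argument and returns a function.
S = lambda x: lambda y: lambda z: x(z)(y(z))
K = lambda x: lambda y: x

I = S(K)(K)      # identity bird: SKKz = (Kz)(Kz) = z
M = S(I)(I)      # mockingbird:   SIIz = (Iz)(Iz) = zz

identity_result = I(42)            # K(42)(K(42)) gives back 42
aside_result = S(K)(S)("z")        # SKy is also an identity bird, here y = S
mock_of_identity = M(I)            # II, which is I itself
mock_of_kestrel = M(K)("anything") # KK is fixated on K
```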

All this whimsy is fun of course, but I’m sure you’ve all realized by now that birds are more conventionally called combinators. Combinators are functions that take a single combinator and return a single combinator; forests are sets of combinators.

The study of combinatory logic is interesting for computer scientists. It turns out that there is a relatively straightforward way to take any expression made up of S and K and find an equivalent lambda calculus expression, and vice versa. Any expression in lambda calculus can be expressed as a string of S and K combinators. You can use the S and K combinators to express Booleans, numbers, arbitrary functions on integers, you name it; if a Turing machine can compute it, there’s an S/K expression which can be interpreted as computing the same thing.

There’s a lot more I could say about that, but I’m not going to go into the details.

Oh, and when I added lambdas to C# 3 way back in the day, the first test case I wrote to ensure the type analysis was working was something like:

delegate C C(C x);
...
C i = x=>x;
C m = x=>x(x);
C k = x=>y=>x;
C s = x=>y=>z=>x(z)(y(z));

but I’m not going to discuss that further, at least not right now. We’re already way off track from my series on compiling Bean Machine. Let’s get back to it.

Next time on FAIC: We’ll see how to use an approach inspired by combinators to build parts of a compiler, and why ensuring combinators have fixpoints is important.

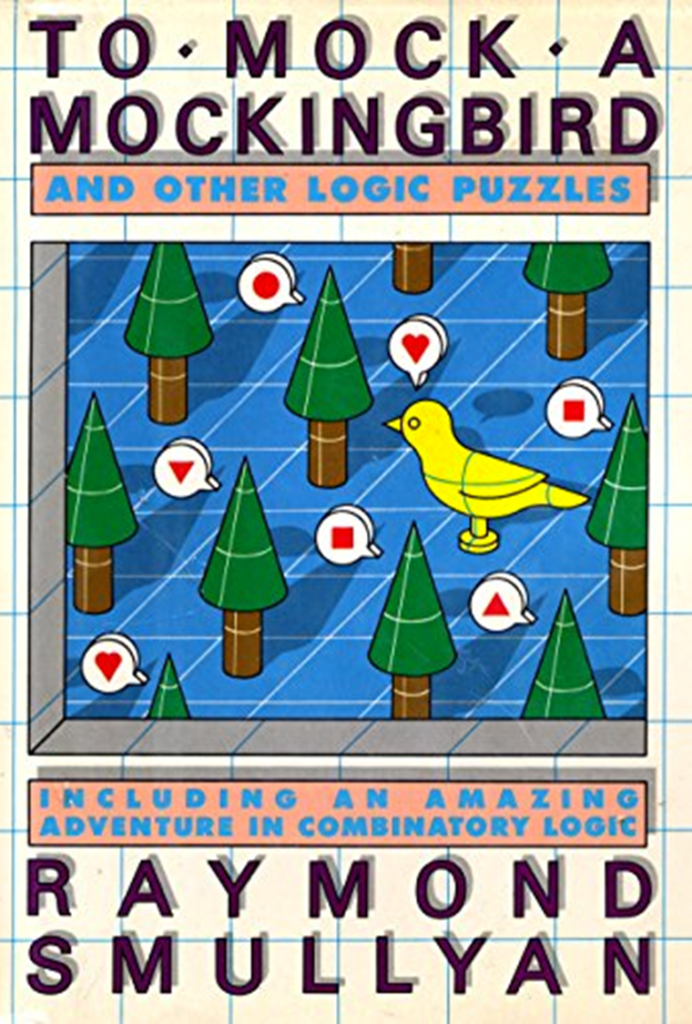

If you enjoyed these puzzles, the ones that I showed are some of the introductory puzzles in To Mock A Mockingbird, which is delightful and educational, and gets as far as computability theory and Gödel incompleteness. Do pick up a copy!

The names of birds, part 3

In the autumn of last year my friend Joan and I went on a little trip up to the Skagit valley north of Seattle to photograph birds of prey; I managed to get a blurry but recognizable shot of this charming little kestrel looking for field mice. It was easily identifiable in the field because kestrels are one of the very few birds of prey that can briefly hover while hunting, almost like a hummingbird.

Well, back to combinatory logic and some puzzles from To Mock a Mockingbird.

We’ve said that if there are birds x and y such that xy=y, then x is fond of y, and y is a fixpoint of x. Smullyan goes on to say that if there are birds x and y such that xz=y for every z in the forest, then x is not just fond of y, it is fixated on y.

The kestrel, K, is an interesting bird. When you call the name of any bird x to it, it calls back the name of a bird in the forest that is fixated on x. That is, the kestrel obeys the identity Kxy=x for all birds x and y in the forest. (Recall from part one that Kxy means (Kx)y, not K(xy).)

Suppose there is a forest which contains a kestrel and moreover in this particular forest K is fond of itself; that is KK=K. Can you prove that a kestrel that is fond of itself is both (1) extremely egocentric, and (2) lonely? Give it a try, and then scroll down.

.

.

.

.

I need a longer lens! This is at the edge of what my little 70-300 zoom can handle.

.

.

.

(1) A bird which is fond of itself is already probably pretty egocentric. Kx gives the name of a bird which is fixated on x, so KK gives the name of a bird which is fixated on K. But KK=K, so K is the name of a bird that is fixated on K. A bird which is fixated on itself is surely extremely egocentric!

(2) By definition (Kx)y=x for all x and y. Since in this forest K is fixated on K, Kx=K for all x. Therefore Ky=x for all x, y. But again Ky=K for all y so K=x for all x in the forest. Every bird in the forest is the kestrel, and therefore there is only one bird in the forest. No wonder an extremely egocentric kestrel is lonely!

Next time on FAIC: The starling!

The names of birds, part 2

Reader “Joel” had an insightful comment on the first part of this series which I thought deserved a short episode of its own. Recall that we proved the theorem “if a compositional forest contains a mockingbird then every bird in the forest has a fixpoint”. An equivalent way to state that theorem is: “if a forest contains a bird without a fixpoint then either the forest is not compositional or it contains no mockingbird”. Joel’s question was whether there is a more “intuitive” way to understand why the mockingbird is so interesting that the presence of a bird without a fixpoint should prevent a mockingbird from existing in the same (compositional) forest.

The short version is: we’ll say that two birds agree on an input if their outputs are the same for that input. The mockingbird is a bird that agrees with every other bird on at least one input. A bird without a fixpoint is a bird that can always produce a different output than its input. Their composition is a bird which disagrees with every bird in the forest on one input and therefore is not in the forest at all.

The long version is:

I find it easiest to get an intuition for a general rule by looking at some simple examples.

The first thing I’d note is that it is quite easy to construct a non-compositional forest containing a bird without a fixpoint. A simple example would be a non-compositional forest containing exactly two birds, A and M. Define them such that:

AA = M

AM = A

MA = M

MM = M

Plainly these are two different birds, since their responses disagree on input M. Plainly M is a mockingbird, since Mx=xx for all x in the forest. And plainly A has no fixpoint. This forest is not compositional because there is no bird C in the forest such that Cx=A(Mx) for all x. With such a small number of birds in the forest it seems quite reasonable that one might have no fixpoint.
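
With only two birds, every one of these claims can be confirmed by brute force. The sketch below encodes the four responses as a dictionary and checks them; the helper names are invented for the example.

```python
from itertools import product

birds = ["A", "M"]
# call[(p, q)] is the response when we call the name q to the bird p.
call = {("A", "A"): "M", ("A", "M"): "A",
        ("M", "A"): "M", ("M", "M"): "M"}


def is_mockingbird(m):
    """True if m x = x x for every bird x."""
    return all(call[(m, x)] == call[(x, x)] for x in birds)


def fixpoints(p):
    """All birds x that p is fond of, that is, p x = x."""
    return [x for x in birds if call[(p, x)] == x]


def composition(a, b):
    """Return a bird c with c x = b(a x) for all x, or None if none exists."""
    for c in birds:
        if all(call[(c, x)] == call[(b, call[(a, x)])] for x in birds):
            return c
    return None


# Pairs (a, b) for which the forest contains no composing bird.
missing = [(a, b) for a, b in product(birds, repeat=2)
           if composition(a, b) is None]
```

The pair `("M", "A")` appears in `missing`, which is the absent bird C with Cx=A(Mx) from the argument above.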

Let’s look at a more interesting example, this one in a compositional forest. Let’s suppose there is a countable infinity of birds in the forest. By “countable infinity” I mean that we can number each bird with a natural number; we’ve got bird 0, bird 1, bird 2, and so on. In fact, let’s just use natural numbers as the names of the birds in this forest.

Since each bird needs a response to hearing the name of every other bird in the forest, we can imagine an infinite lookup table that says what each bird responds when it hears the name of another bird in the forest. We’ll put the “called to” bird on the vertical axis and the “name called” on the horizontal axis:

      0 1 2 3 ...
    -------------
  0 | 9 5 2 5 ...
  1 | 8 3 6 7 ...
  2 | 0 1 2 3 ...
  3 | 3 1 4 1 ...
... |
  S | 1 2 3 4 ...
... |

So in our notation 0 1=5, 2 3=3, and so on.

Let’s suppose this forest is compositional and contains a bird, call it S, which has no fixpoint. We could choose any such S, but without loss of generality we shall pick a specific S, the bird where S 0=1, S 1=2, S 2=3, S 3=4, … and so on; the “successor” bird. Of course S has some “number” name but we don’t really care what it is, so we’ll just “alias” that one as S.

Now let’s suppose there’s a mockingbird in the forest and deduce a contradiction. The mockingbird tells us what is on the main diagonal of the table! That is, M 0=9, M 1=3, M 2=2, M 3=1, and so on. (As with S, M is an alias for some “number” name but we don’t know or care what it is.)

Now comes the fun bit. The forest is compositional, so therefore there exists a bird C such that Cx=S(Mx) for all x. We do care what the number name of C is. So, which is it?

C 0=10, and therefore C is not 0, because 0 0=9. But similarly, C 1=4, so C is not 1. And similarly C is not 2, 3, 4, 5, … because C definitely disagrees with each of those functions, I mean birds, at the diagonal. But if C has no number name, then it is not in the forest at all, contradicting the statement that the forest is compositional. We now have deduced a contradiction, and therefore our assumption that M is in the forest is shown to be false.

By looking at a specific example — a countable forest with a successor bird — perhaps you have a better intuition about why Mx=xx and any bird S that has no fixpoint cannot coexist in a compositional forest: if they did then the bird C such that Cx=S(Mx) definitely disagrees with every bird in the forest on at least one input, and therefore the forest would not be closed under composition; there would always be a missing bird.
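
The diagonal argument can be replayed on the visible corner of the lookup table. The sketch below only covers the four rows and columns shown in the table, so it illustrates the argument rather than proving it for the infinite forest.

```python
# Top-left corner of the lookup table: table[p][q] is bird p's response to q.
table = [
    [9, 5, 2, 5],
    [8, 3, 6, 7],
    [0, 1, 2, 3],
    [3, 1, 4, 1],
]


def successor(n):
    """S: has no fixpoint, since n + 1 never equals n."""
    return n + 1


def mockingbird(n):
    """M reads the diagonal: M n = n n."""
    return table[n][n]


def c(n):
    """C is the composition: C x = S(M x)."""
    return successor(mockingbird(n))


diagonal = [mockingbird(n) for n in range(len(table))]
c_values = [c(n) for n in range(len(table))]
# For each bird n, compare its own response to n with C's response to n.
disagreements = [table[n][n] != c(n) for n in range(len(table))]
```

Every entry of `disagreements` is true: C differs from bird n on input n, so C cannot be any of the numbered birds.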

Next time on FAIC: We’ll look at some more fun examples and then get back to compilation.

The names of birds, part 1

For the next part in my Bean Machine retrospective to make sense I’ll need to make a short digression. In looking back on the almost 20 years I’ve been blogging, it is surprising to me that I’ve only briefly alluded to my appreciation of combinatory logic. In the next couple of episodes, I’ll do a quick introduction based on the delightful book that introduced it to me: To Mock A Mockingbird, by the late Raymond Smullyan.

Imagine a forest containing some birds, possibly finitely or infinitely many. These are unusual birds. When you call the species name of a bird in the forest to a bird in the forest, it calls one back to you. Maybe the same, maybe different, but when you tell a bird the name of a bird, it names a bird back to you. There might be a Red Capped Cardinal in the forest and when you call out Great Blue Heron to it, it calls back Belted Kingfisher.

(Photos by me; click for higher resolution.)

We will notate “I called Q to P and got response R” as PQ = R. If I then called out S to R and R responded with T, we’ll notate that as PQS = RS = T. We’ll use parentheses in the obvious way: PQS = (PQ)S and this might be different from P(QS). The latter is “I called S to Q, and then called Q’s response to P”. I’ll use capital letters to represent specific bird names, and small letters to represent variables.

The question we’re considering here is: under what circumstances will a bird call back the same name you called to it? That is, for a given bird y, under what circumstances does yx = x?

Smullyan calls this relationship “fondness”: “y is fond of x” means that yx = x. If y is fond of x then x is said to be a “fixpoint” of y.

A forest is said to be “compositional” if for every pair of birds (a, b), where a and b can be the same or different, there is a bird c in the forest such that cx = b(ax) for all x. That is, if we call any name x to a, and then call its response to b, we get the same result as simply calling x to c. c is the composition of “call x to a, and then call that response to b”.

A mockingbird is the bird M with the property that Mx = xx. That is, for any bird name x, M tells you what x’s response is to its own name. (The attentive reader will note that we have not said what MM is, but at least we know from the definition that MM = MM, so give me some credit for consistency at least.)

Theorem: if a compositional forest contains a mockingbird then every bird in the forest is fond of at least one bird.

Proof: Try to prove it yourself; scroll down for the solution.

.

.

.

.

.

.

.

Let p be any bird. Let c be the bird that composes p with M. Therefore cx = p(Mx) for all x.

In particular, that’s true for c, so cc = p(Mc). But Mc = cc, thus cc = p(cc), and we’ve found a bird that p is fond of. Since p was any bird, every bird in the forest is fond of at least one bird. Or, put another way, every bird in a compositional forest with a mockingbird has at least one fixpoint.
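
The theorem can also be sanity-checked by exhaustive search over small finite forests. The sketch below enumerates every response table for forests of one, two, and three birds, keeps the ones that are compositional and contain a mockingbird, and looks for a bird without a fixpoint. It is a brute-force experiment under those size limits, not a substitute for the proof.

```python
from itertools import product


def forests(n):
    """Yield every n-bird forest as a table: t[p][q] is p's response to q."""
    for flat in product(range(n), repeat=n * n):
        yield [flat[i * n:(i + 1) * n] for i in range(n)]


def is_compositional(t):
    """For every pair (a, b) some c satisfies c x = b(a x) for all x."""
    n = len(t)
    return all(
        any(all(t[c][x] == t[b][t[a][x]] for x in range(n)) for c in range(n))
        for a in range(n) for b in range(n)
    )


def has_mockingbird(t):
    """Some bird m satisfies m x = x x for all x."""
    n = len(t)
    return any(all(t[m][x] == t[x][x] for x in range(n)) for m in range(n))


def every_bird_has_fixpoint(t):
    """Every bird p is fond of at least one bird x, that is, p x = x."""
    n = len(t)
    return all(any(t[p][x] == x for x in range(n)) for p in range(n))


qualifying = [t for n in (1, 2, 3) for t in forests(n)
              if is_compositional(t) and has_mockingbird(t)]
counterexamples = [t for t in qualifying if not every_bird_has_fixpoint(t)]
```

As the theorem predicts, `counterexamples` comes out empty while `qualifying` does not.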

Next time on FAIC: we’ll take a look at a few more interesting birds, and then discuss why this whimsy is relevant to compilation before getting back to Bean Machine.