Source code for this episode is here. I’ve added a panel to the UI that moves as the UI is resized; I’ll add some controls to it in future episodes.

Back in 1994 I made a photocopy of an article from the January issue of PC Techniques magazine; it was about the winner of a Life optimization contest for x86 programmers, and the algorithm in it was, and still is, nigh impenetrable. We will attempt to penetrate some of its secrets later on in this series. Back in 1994 I put it in my file folder of articles about Life to come back to later and then I did not for, well, I guess for 26 years. Where does the time go?

The article was the second of a series of three, and I always meant to look up the other two but never did. I believe the third was never written, but I was pleasantly astonished when researching this series to discover that the first two articles were not only available on the internet, but the markup source code of the articles is checked in to GitHub. You can read the two articles — not in markup — here; they have been reformatted into chapters of a book about optimization. I look forward to reading the whole thing; it looks fascinating.

They are long articles with lots of source code, so don’t worry if you don’t want to read it all; I’ll summarize the key points here and provide my own C# implementation of the algorithm described.

I never met Michael Abrash when I was at Microsoft, but of course he was well known as an optimization guru. Reading chapter 17 of this book now, 25 years later, I am powerfully struck by what is dated and what is timeless.

Of course the hardware details are extremely dated. In 1994 DOOM ran at a resolution of 320×200; a 200×200 Life board was considered large. The target machine for achieving acceptable performance in Abrash’s Life optimization was a 20MHz 486. A mere 26 years later I’m typing this on a mid-range 12 core 3.2GHz i7 with two 1920×1080 monitors, and (leaving aside Jeroen’s fun 16×16 proof-of-concept adventure) I would consider any Life grid of less than 256×256 at a bare minimum to be unreasonably small. Kids today don’t know how good they have it and should get off my lawn.

Similarly, “kids today” reading Abrash’s article might wonder why on Earth he is so concerned about “segments”, whatever those are; I encourage you to read up on segmented memory architectures and just imagine how much fun it is to live in a world where there are pointers of different sizes for different-sized allocations. A uniform 64 bit process-isolated virtual address space is a dream come true.

The seemingly very dated part though is the notion that writing a program in hand-optimized assembly is crucial for truly achieving maximum performance. The basic idea here is that humans know how to beat the compiler at its own game of translating their algorithms into optimized assembly.

Though undoubtedly there are still a few tricks and tips that assembly language experts can pull off from time to time, we are all better off with the attitude that if there’s a way to identify a pattern and wring some extra performance out of an optimization, then put that pattern in the compiler’s optimization pass. That way we all get it.

I want to be clear here that Abrash was absolutely correct in 1994. I could tell war stories; for example, in 1996 there was a bug in the MSVC compiler where it would de-optimize certain switch statements, and since the inner loop of the JScript engine was a switch statement over the bytecode opcodes, this was a major regression in script engine performance. When the VC team could not promise that they’d fix the bug before we shipped, we ended up writing a bunch of macros and assembler that re-optimized the switch statement. There were many other examples of code in Visual Basic, VBScript, JScript, OLE Automation, and other key components where we’d call into little hand-optimized assembly routines when the compiler couldn’t do a good enough job to meet our needs. When the Excel inner loop was rewritten from hand-optimized assembly into C, that was a big deal. And so on.

Why did I say seemingly above? Because this actually is not dated if we look at it more generally.

I’m sure that over reading this series, many readers have said to themselves “oh, I see how to improve this, let’s rewrite it in unsafe C# and use pointers to avoid memory safety checks…” Inline assembly is to C as unsafe code is to C#. Both are the ability to say “the relatively safe abstractions afforded by this language are preventing me from accessing the performance capabilities of the underlying unsafe implementation”. By taking the burden on ourselves of guaranteeing safety instead of relying on the compiler or runtime, we can bypass some safety checks that we “know” to be unnecessary. The problems arise, of course, when we are wrong!

But what I love about reading this article 26 years after I first intended to is seeing how much of that 1990s Microsoft performance culture I’ve internalized and how much is still relevant today:

- The underlying theme of this series: find the characteristics of your problem space that admit optimizations, rather than relying on improving raw speed at the margins.

- Get the algorithmic complexity understood first before you try to optimize, particularly if scaling is important.

- Know your target; what are the capabilities of the user’s hardware? What performance metrics are relevant to them? Don’t optimize the wrong thing, and don’t optimize for hardware no user has.

- Measure. Profile. Attack the biggest problem, and then measure again.

- Listen to your intuitions but be prepared to be proven wrong wrong wrong.

- Choosing the wrong abstraction can make it difficult to reason about performance and fix performance problems.

- You can often trade off between space and time; if you can afford more space then you can achieve less time, though beware of the fact that it takes time to fill that space.

- You can often trade off between generality and performance; sometimes you can solve a more restricted problem much faster. But even better, sometimes you can realize that a generalization leads you to a faster solution through an algorithmic improvement.

- Heavily optimized programs are confusing, hard to read, and hard to change, and hard to make even faster. Always try to make it right, then make it clear, and only then try to make it fast. A fast program that is wrong or is unclear, is just making future work harder.

I’m not going to take all of this advice in this series — I optimize plenty of stuff in my day job! — but it is a good summary of the sorts of things I want to keep in mind as we proceed with the next few episodes.

Aside: A commenter asked me in the previous episode what the point of all this optimization is; normally of course I am all about “know what metrics are important to your customer and measure those”, but I haven’t said what the goal state is other than “bigger and faster please”. That’s a great point and I should address it in a future episode; why exactly do we want to compute really large Life boards really fast? But the short answer is: there are interesting structures that have millions of living cells and take hundreds of thousands of ticks to run, and we cannot effectively explore those structures if the client is restricted to an 8-quad. More on this later!

Enough chitchat and reminiscing; what were Abrash’s observations of Life characteristics that lead us towards an improved algorithm?

- Because of the overcrowding rule, it is impossible for a majority of cells to be alive.

- I already observed this fact in an early episode when I pointed out that my display abstraction is “call me back with the locations of all the living cells in this grid subset”, but it is good to bring it up again because it is a key point in many Life algorithms.

- In fact, the most common kind of dead cell is the isolated dead cell — a dead cell surrounded by eight other dead cells.

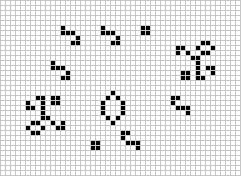

- If you watch a pattern like acorn run for a while you see that there are three kinds of living cells:

- The majority of living cells are in “still lifes” — ponds, beehives, and so on.

- There are very small number of cells in oscillators and gliders that change consistently.

- Then there are one or more “fronts” of destruction and creation as changing patterns overlap the still lifes and oscillators.

- The naive algorithm spends most of its time counting living neighbours.

- Still lifes away from the “fronts” and isolated dead cells do not change their neighbour counts from one tick to the next.

The most basic conclusion we can draw from these observations is: we could trade space off for time and store the neighbour counts along with the cell state; they almost never change, and if they don’t change, we don’t need to recompute them.

Moreover, we don’t even need to make a space tradeoff because I already had been using one byte per cell! In two previous episodes I discussed doing a computation where we add up a cell and all the living neighbours and then add on top of that the original cell multiplied by 8. But we could simply have a small variation on that as our basic data structure. We’ll declare that a cell is a byte where bits 0 through 3 are the neighbour count, and bit 4 is the state of the cell.

I like to start by making a thin abstraction over bytes that gives me the semantics I want. Those semantics are: current state, current neighbor count, increase and decrease the count:

struct Cell

{

private readonly byte cell;

public Cell(byte cell)

{

this.cell = cell;

}

private const int state = 4;

private const int statem = 1 << state;

private const int countm = 0xf;

public bool State => (cell & statem) != 0;

public int Count => cell & countm;

public bool AllDead => cell == 0;

public Cell MakeAlive() => new Cell((byte)(cell | statem));

public Cell MakeDead() => new Cell((byte)(cell & ~statem));

public Cell Increment() => new Cell((byte)(cell + 1));

public Cell Decrement() => new Cell((byte)(cell - 1));

}

}

Long time readers will surely know that I always want the bit twiddling to be out of the mainline code and in helper methods where it is nicely hidden. The jitter will, we hope, do a good job of inlining these operations.

Mutable structs are of course pure evil; even though we’re going to be using an array of these things and the array is a collection of mutable variables, so there would be no problem mutating a struct, I just can’t bring myself to do it.

We will go back to our naive algorithm roots and declare a two-d array of cells as our grid: (I’m skipping over the boring boilerplate; see the GItHub source code for details if you want it; I’ve also omitted the debug assertions and comments and whatnot.)

class Abrash : ILife

{

private const int height = 258;

private const int width = 258;

private Cell[,] cells;

We now have a bunch of work to do every time a cell becomes alive that was dead, or a cell dies that was alive; namely, the neighbour counts of its eight neighbours is now wrong. Let’s make a couple methods to help out with that. First, one that makes a dead cell become alive:

private void BecomeAlive(long x, long y)

{

// Make a dead cell come alive.

cells[x - 1, y - 1] = cells[x - 1, y - 1].Increment();

cells[x - 1, y] = cells[x - 1, y].Increment();

cells[x - 1, y + 1] = cells[x - 1, y + 1].Increment();

cells[x, y - 1] = cells[x, y - 1].Increment();

cells[x, y] = cells[x, y].MakeAlive();

cells[x, y + 1] = cells[x, y + 1].Increment();

cells[x + 1, y - 1] = cells[x + 1, y - 1].Increment();

cells[x + 1, y] = cells[x + 1, y].Increment();

cells[x + 1, y + 1] = cells[x + 1, y + 1].Increment();

}

And I won’t bore you with BecomeDead; it’s just the same but in the other direction.

Of course we still must ensure that we do not mutate the array as we are iterating over it, so let’s once again go back to our pre-naive-optimization days and just make a copy of the current state; we’ll iterate over the copy and use it to decide how to mutate the current state array. The algorithm is very straightforward:

public void Step()

{

Cell[,] clone = (Cell[,])cells.Clone();

for (int y = 1; y < height - 1; y += 1)

{

for (int x = 1; x < width - 1; x += 1)

{

Cell cell = clone[x, y];

if (cell.AllDead)

continue;

int count = cell.Count;

if (cell.State)

{

if (count != 2 && count != 3)

BecomeDead(x, y);

}

else if (count == 3)

BecomeAlive(x, y);

}

}

}

Notice that unlike Abrash’s implementation, I am not doing “wrap around” semantics. Rather, I’m never updating the edges; they will stay dead forever. This means that we can entirely skip doing any validity checking when updating neighbours, because every cell that can change has eight good neighbours.

However, I also bumped up the grid size to 258×258, so that we are still computing an entire 8-quad worth of cells. We can live with wasting a KB of memory here. (Of course back in the days of writing hand-optimized 80486 assembler it would be unattractive to bump up past a 64 KB buffer because now you need a long pointer to address all of it, and have I mentioned that I am glad to no longer have to remember the rules for segmented architectures?)

I’m doing this mostly to keep the code simple, but by not checking the edge conditions in theory we ought to get a slight speed improvement. However, recall that when I attempted the same “optimization” on the naïve algorithm, performance got very slightly worse so things are maybe not as easy to analyze as I’m making out here.

The “continue the loop if the cell is an isolated dead cell” check is not strictly speaking necessary; the algorithm is correct without it. But there are two things here. First, as I mentioned before, this is the common easy out. Dead cells with all dead neighbours is the most common case, and it is the cheapest to check, so check it as soon as possible so you can skip on to the next loop iteration.

Second, in Abrash’s original implementation, the operation “skip ahead in this loop over an array to the first non-zero byte” can be fairly easily identified by an optimizing C compiler and turned into a single x86 machine instruction; this optimization was common in optimizing compilers of that age and I mentioned a related optimization it in a previous episode. (The optimization is apparently no longer a win on modern hardware.)

What is the asymptotic complexity of this algorithm? Give that some thought and then scroll down.

.

.

.

.

.

.

.

.

If the number of cells in the grid is n, then we clone an array, which is O(n) cost. Then we do O(n) loop counter operations and O(n) comparisons to zero per step. The algorithm has to be at least O(n), and in fact it is O(n) still, in both time and space.

However, we can be a little bit more sophisticated here and notice that though there are always n operations total inside the loop, we can divide them into three buckets:

- Extremely cheap operations: continue if equal to zero is one of the cheapest things you can put into a loop. Since most cells are typically isolated dead cells, most cells fall into this bucket.

- Very cheap operations: fetch the state and the count, do some comparisons on them, and discover that the cell does not need to change. We do a little more work here, but of the cells which are not isolated dead cells, most of them are unchanging cells in a typical Life instance.

- Comparatively expensive operations: change a cell, update the eight neighbor counts.

Even though we have no asymptotic complexity win, we expect to get a win in raw performance here because we’ve gone from one comparatively expensive operation per cell — add up the eight neighbours — to one comparatively expensive operation per changed cell.

Without further ado, what is the raw performance here for 5000 iterations of acorn?

Algorithm time(ms) ticks size(quad) megacells/s

Naïve (Optimized): 4000 5K 8 82

Scholes 3250 5K 8 101

Frijters SIMD 1000 5M 4 1200

Abrash 550 5K 8 595

(Again, the comparison to the SIMD implementation is not really fair since the implementation given cannot scale beyond a tiny 4-quad but all the others can; I would love to see a SIMD implementation on an 8-quad for comparison so if someone wants to write one, please let me know in the comments.)

We’re almost 6x faster than our previous best algorithm that can do an 8-quad. This is an enormous improvement for what is really a very small change in the algorithm!

It comes at a cost, because we now have redundancy in our data structure that must be kept consistent for the algorithm to operate correctly, but that cost is certainly worth it.

What looks like potential issues here?

- Once again we are allocating short-lived, potentially large garbage on every tick

- We might experiment with using a 1-d array instead of a 2-d array; we could do a copy instead of a clone, and keep two arrays around instead of allocating a new one and throwing it away

- Do we accrue any penalty for array bounds checking?

And so on; we could go through the same process we did for the naive algorithm and make marginal improvements here, but I am eager to move on.

Are there any other performance wins that we’re missing? Yes, one. I mentioned on my big list above that choice of abstraction can drive performance, and here we have an example of that. My “draw the screen” abstraction assumes that we are drawing a new screen every time, it assumes that the screen is all dead by default, and it assumes that most cells will be dead on the region drawn, and therefore the abstraction is “call me back for every live cell in the region”. But this algorithm knows when a cell changed. If the abstraction was instead “the screen has not changed since the last redraw, just give me the changes” then screen drawing could get a performance win!

I said early on that I was not going to worry about screen drawing performance and I’m going to stick with that. But still it is relevant to notice that in this case my algorithm and my abstraction have a mismatch that prevents us from getting a performance win in a common case.

Next time on FAIC: I just said “this algorithm knows when a cell changed”. Can we use that insight to drive a further improvement?