I just thought of a really cute application of the stochastic workflow technology we’ve been working on; most of the series has already been written but it fits in here, so I’m going to insert this extra bonus episode. We’ll implement the zero value next time.

Code for this bonus episode is here.

You are probably familiar with the famous “Monty Hall” problem, but if not, here it is:

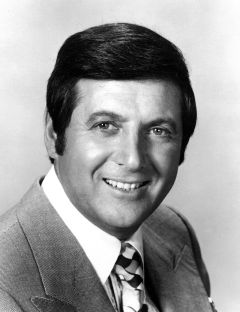

- You’re on a game show hosted by Monty Hall, the handsome Canadian fellow pictured above.

- Before you there are three closed doors; behind a uniformly randomly selected door there is a new car; behind the other two there is nothing.

- You get to choose a door, and you get what is behind it as a prize: either a car, or nothing.

- You randomly choose a door, again by some uniform process.

- Monty — who knows where the car is — now always opens a door that meets two criteria: it does not have the car behind it, and it is not the door you chose.

- To clarify: if you chose the door with the car, Monty chooses one of the remaining two doors by a uniform random choice. If you chose a door without the car, Monty only has one door he can open, and he opens that one.

- Monty gives you the opportunity to switch your choice to the other still-closed door.

- Assuming you wish to maximize your probability of winning the car, should you switch doors or stay with your original choice?

Aside: I’ve tried to be very precise in my description of the game for the purposes of our analysis. In the real game as played on television there were irrelevant details such as: the “prizes” behind the other two doors were goats or other bizarre, undesirable items, and so on. But there were also germane differences between the real game and our model above; for example, in the real game Monty would sometimes offer choices like “do you want to switch your door, or forget about the doors entirely and take the prize that is in this box?” and it is not clear by what process Monty decided to offer those choices. In this simplified version of the game I’ve removed all human agency from Monty; for our purposes, Monty is just a machine that is following an algorithm that involves generating random outcomes.

Exercise 1: If you don’t already know the solution, work it out. The answer is below.

.

.

.

.

.

You are two-to-one more likely to win the car if you switch than if you stay. But don’t take my word for it. Let’s solve the problem with computers, not by thinking!

Plainly the key to the problem is what is the distribution of Monty’s choice? Monty chooses a random door, but is observed to not pick a door with a car or the door which you picked. We can represent that as a two-parameter likelihood function:

IDiscreteDistribution<int> doors = SDU.Distribution(1, 3);

IDiscreteDistribution<int> Monty(int car, int you) =>

from m in doors

where m != car

where m != you

select m;

There’s no logical difficulty in adding more parameters to a likelihood function; think of the parameters as a tuple if that makes you feel better.

Now we can answer the question. Here’s the probability distribution of winning if you do not switch:

var noSwitch1 =

from car in doors

from you in doors

from monty in Monty(car, you)

select car == you ? “Win” : “Lose”;

Console.WriteLine(noSwitch1.ShowWeights());

And the output is:

Win:1 Lose:2

As predicted by thinking, you are twice as likely to lose if you do not switch. Computers for the win!

Exercise 2: Wait a minute, we never even used the value of range variable monty in the query. How is it possible that adding a from clause to the query changes its outcome when the sampled value is not even used?!?

Give this some thought and answer in the comments.

Exercise 3: OK smart person, if you thought that one was easy, take a look at this one.

We have our likelihood function Monty() which is just a query comprehension, and our noSwitch1 which is also just a query comprehension. We can make the program a little bit shorter by combining them together in the obvious way:

var noSwitch2 =

from car in doors

from you in doors

from monty in doors

where monty != car

where monty != you

select car == you ? “Win” : “Lose”;

And if we print out the weights of that one… uh oh.

Win:1 Lose:1

I would have thought this program fragment to be logically the same as before, but this gives weights of 1:1 when we know the correct answer is 1:2.

Where did I go wrong?

Again, answer in the comments.

Next time on FAIC: Let’s get back on track from this silly diversion! We were talking about the zero value, so let’s implement it.

Exercise 2: Adding the

from Montyclause doesn’t actually change the distribution. You’re picking one of three doors, so we should expect 1:2 odds of winning. I checked by commenting out that line, and we get the same result.Exercise 3: You didn’t go wrong at all! You just wrote code to answer a different question. The odds 1:1 represent the odds of winning, given that you switch. The odds of 1:2 are the odds of winning giving switching compared to winning given not switching.

Your answer to exercise 2 is spot on; I was being deliberately misleading when I implied that the distribution changes! (April fools woooooo!)

As is frequently the case when I’m being deliberately misleading, I marked the wrong bit with “plainly the key to the problem is…” 🙂

This to me illustrates very clearly the correct answer to the Monty Hall problem: the fact that Monty also chooses a door, and moreover, always chooses an empty door that is not your door, is irrelevant to the problem and does not change the fact that you had a 1/3 chance of picking the correct door in the first place. All Monty is telling you is something you already know: that one of the doors you didn’t pick is empty.

Put another way, we could equivalently describe the game as follows: you pick a door, Monty then offers you the opportunity to take whatever is behind *both doors that you did not pick*, and points out that one of them is empty. Knowing which one of them is empty should not change your analysis: that obviously “take what is behind two doors” is twice as good as “take what is behind one”.

For exercise 3, obviously you are right that the query answers an entirely different question. But I’m not following the logic of your explanation.

Well, to celebrate April Fools, here’s a small twist on this problem: let Monty uniformly chose from any doors you didn’t pick. What’s the probability of you winning if you don’t switch, conditioned by the event “Monty didn’t open the door with the car”?

Turns out, knowledge is power.

Let me make sure I understand the twist you are proposing. It is: the car is behind a random door. I choose a random door. Monty chooses a random door of the two that are left, and if he chooses a door with the car, we stop the game and start over. If he chooses a door without a car, he opens it and gives me the opportunity to switch.

If I understand correctly, this is basically the second workflow, and so it’s 50-50. That is:

* there is a 1/3 chance that I picked correctly, it doesn’t matter what Monty does, and I lose by switching

* there is a 1/3 chance that I picked wrong, Monty shows me an empty door, and I win by switching

* there is a 1/3 chance that I picked wrong, Monty shows me the door with the car, and we start all over again.

We discard the “start over”, and that leaves us with a ratio of 1/3 to 1/3, which is fifty-fifty.

Yep! And the funny (in my opinion, at least) thing is that in this case, you can use the usual “incorrect” solution to the original MH problem and it will actually be correct: three doors, the host discards one at random, two doors left, obviously the chances are 50-50.

In noSwitch2 you end up giving equal weight to each valid {car, you, monty} triple. This over weights the instances where you win by a factor of 2. In those cases, Monty has two choices since he can pick either of the remaining doors with equal probability. That means that each of those triples should have half the weight of a triple where Monty had no choice.

Exactly right!

This shows how maybe our using query comprehension syntax to express probabilistic workflows is cute, but misleading. With the sequence monad, the refactoring that I did preserves the semantics of the query; if we replaced “doors” with {1, 2, 3} and changed all the types to sequences instead of distributions, the results of the two queries would be the same. But that’s not the case for the distribution monad, for the reasons you point out.

We can do better than over-applying the query comprehension syntax; I’ll have some ideas for that in future episodes.

Some people who stubbornly cling to the wrong answer will think that this is an April Fool’s joke. 🙂

Hah! Good point.

To be clear, the only “April Fools joke” today is that I was deliberately misleading and obtuse in my description of the two queries.

Pingback: Fixing Random, part 18 | Fabulous adventures in coding

Pingback: Fixing Random, part 19 | Fabulous adventures in coding